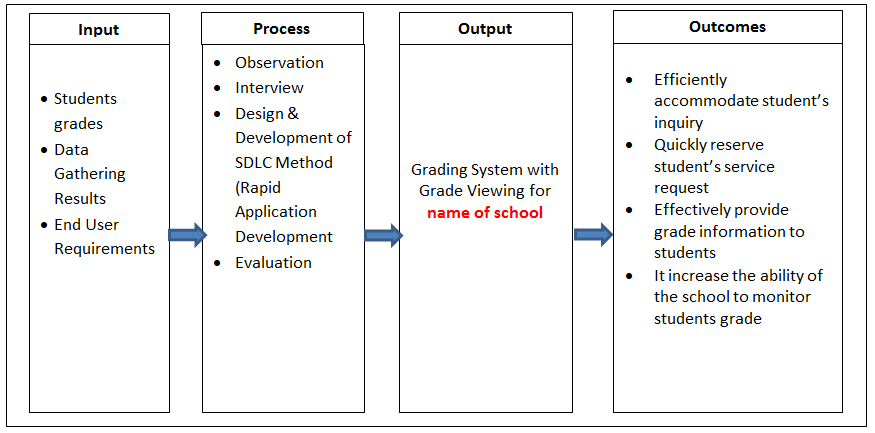

Over the last few years, a large part of my research has been rather data intensive: hundreds of gigabytes of binary data were saved, storing final analyses and intermediate results. Recently I had an issue with a data file generated by a chain of R functions, and I wasn’t able to retrace the “history” of those data: the only (meta)information I had was the creation date and the long filename that I normally use to convey information about the analysis and the functions I used. Unfortunately, in this case it wasn’t enough, so I came up with a partial solution for my R workflow: a save function that stores the data together with metadata (when, how, where, etc.).

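```r
mySave <- function(..., file) {
  callingF <- sys.call(-1)  # call of the function that invoked mySave
  cTime <- Sys.time()       # date and time of the save
  cWd <- getwd()            # current working directory
  sInfo <- Sys.info()       # system information (including hostname)
  metadata <- list(callingF, cTime, cWd, sInfo)
  save(..., metadata, file = file)
}
```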

If I use this function instead of `save`, the saved file will also include the original function call, the full date and time, the path of the working directory and information about the system (including the hostname). It is far from perfect, but it is a personal first step towards making my research fully reproducible.
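To show how the metadata can be read back later, here is a minimal sketch. The names `results`, `runAnalysis` and `outFile` are hypothetical and only serve the illustration; the file is written to a temporary location and loaded into a separate environment so that nothing in the workspace gets overwritten.

```r
# Same function as above, repeated so that the example runs on its own.
mySave <- function(..., file) {
  callingF <- sys.call(-1)
  cTime <- Sys.time()
  cWd <- getwd()
  sInfo <- Sys.info()
  metadata <- list(callingF, cTime, cWd, sInfo)
  save(..., metadata, file = file)
}

# Hypothetical data and analysis step (names are assumptions for this example).
results <- c(1.5, 2.5, 3.5)
runAnalysis <- function(outFile) {
  mySave(results, file = outFile)
}

outFile <- tempfile(fileext = ".RData")
runAnalysis(outFile)

# Load into a fresh environment instead of the global workspace.
loaded <- new.env()
load(outFile, envir = loaded)

ls(loaded)            # names of the stored objects, including "metadata"
loaded$metadata[[1]]  # the call that triggered the save
loaded$metadata[[2]]  # when the file was written
loaded$metadata[[3]]  # working directory at that time
loaded$metadata[[4]]  # Sys.info() output, including the hostname
```

Note that this sketch keeps `results` in the global environment, because `save()` resolves object names starting from the frame of `mySave` rather than from its caller; objects that exist only inside the calling function would probably need extra handling.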